Last week, I came across an interesting paper from the Workshop on the Economics of Information Security (WEIS), held in Boston on June 3-4, 2019. It examined the cost of the different strategies that organizations use to patch software vulnerabilities across their enterprise. The authors used data from Mitre’s CVE (Common Vulnerabilities and Exposures) database from 2009 to 2018 as the basis for their study. The dataset contained close to 76K vulnerabilities, but it should come as no surprise that hackers do not exploit all of them. Indeed, hackers took advantage of only around 4200 of them, roughly 5.5%, to launch attacks. If enterprises could predict which vulnerabilities fall in the likely-to-be-exploited category, they could prioritize the rollout of patches more effectively.

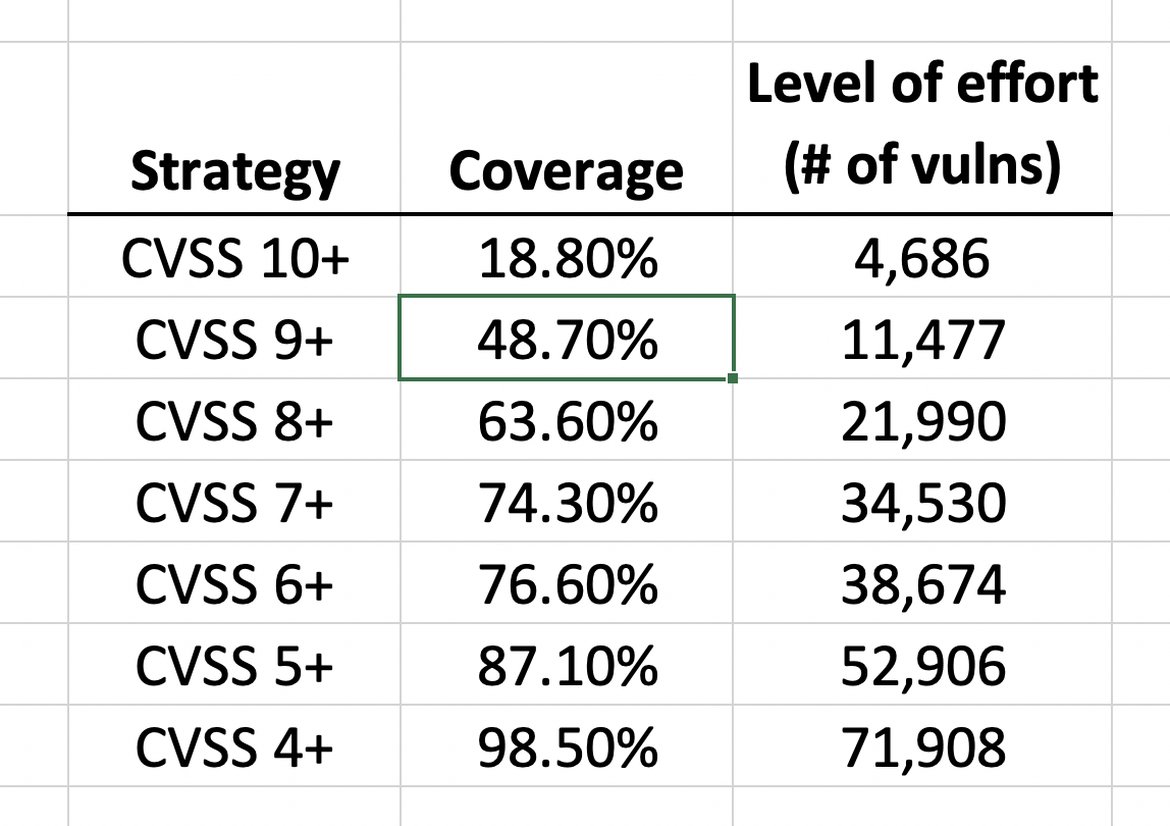

Predicting which vulnerabilities are likely to be exploited is no easy task. The most popular approach in the industry is to use the Common Vulnerability Scoring System (CVSS), which was first developed in 2003. It is currently at version 3.1 and has become the de facto international standard for measuring the severity of a vulnerability. The details of the formula are published in the CVSS specification, but for now, suffice it to say that CVSS provides a numeric score ranging from 0 (lowest severity) to 10 (highest severity). One common policy is to require that vulnerabilities above a given score be patched within a certain timeframe. For instance, the US Department of Homeland Security (DHS) requires critical severity vulnerabilities (CVSS 9.0-10.0) to be patched within 15 days and high severity vulnerabilities (CVSS 7.0-8.9) to be addressed within 30 days.
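A threshold policy like this is simple to express in code. The sketch below is only an illustration: the function name `patch_deadline_days` is hypothetical, and it assumes the high severity band runs from 7.0 up to (but not including) 9.0, as in the CVSS v3 severity ratings.

```python
from typing import Optional


def patch_deadline_days(cvss_score: float) -> Optional[int]:
    """Return the patch deadline in days under a DHS-style policy.

    Assumed bands: critical is 9.0-10.0, high is 7.0-8.9.
    Returns None when the policy sets no deadline for the score.
    """
    if not 0.0 <= cvss_score <= 10.0:
        raise ValueError("CVSS scores range from 0 to 10")
    if cvss_score >= 9.0:
        return 15  # critical severity: patch within 15 days
    if cvss_score >= 7.0:
        return 30  # high severity: patch within 30 days
    return None  # below the policy threshold


# Illustrative scores, not taken from the paper's dataset.
for score in (9.8, 7.5, 5.0):
    print(score, patch_deadline_days(score))
```

Lowering the threshold (for example, adding a deadline for medium severity) is a one-line change, which is exactly why such policies are popular and why the patch volume they imply grows so quickly.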

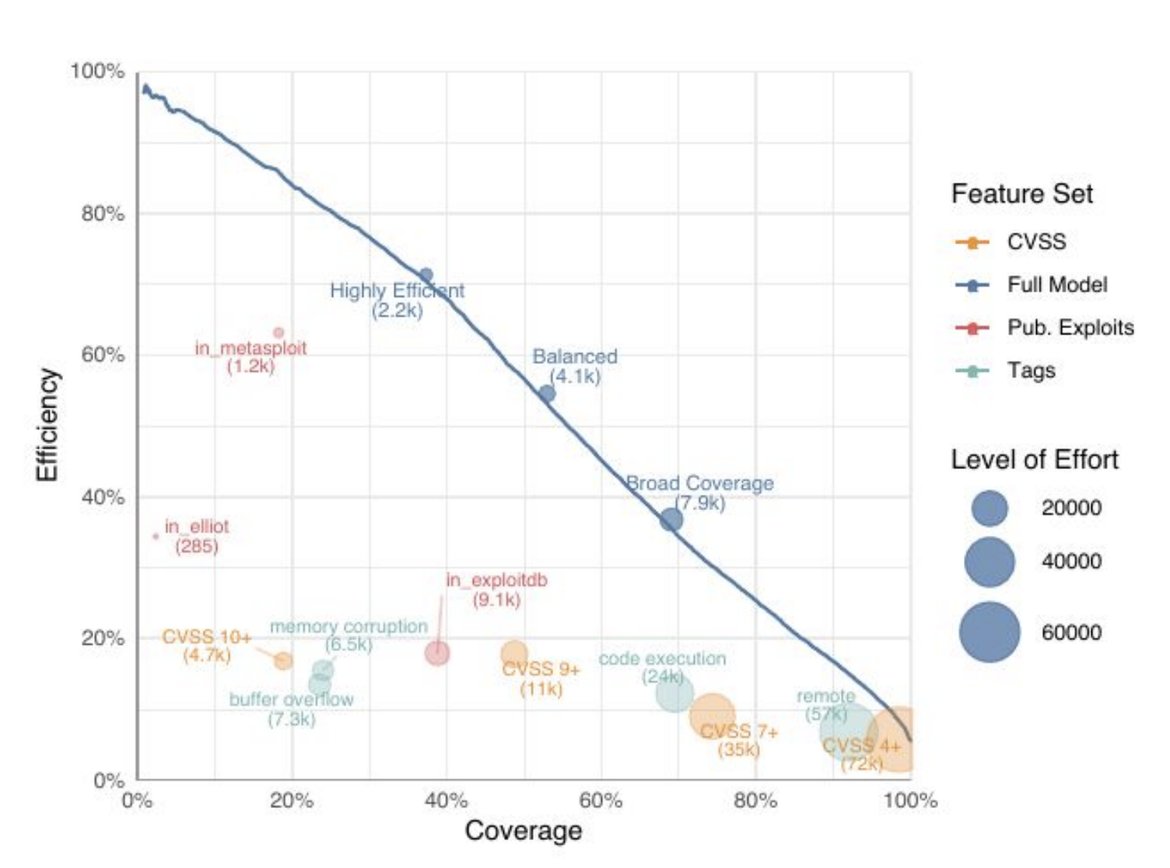

The table shows the coverage of the DHS strategy, where coverage is defined as the percentage of all vulnerabilities known to be exploited in the wild that the strategy patches. For example, if 100 vulnerabilities have been exploited and a particular strategy calls for 20 of them to be patched, then the coverage is 20%. For the dataset used in the study, the CVSS 9+ strategy provides a coverage of 48.7%, whereas the CVSS 7+ strategy results in close to 75% coverage. Note that the latter requires a large number of vulnerabilities (34.5K out of the 76K in the dataset) to be patched, which can become a significant effort for a large enterprise.
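To make the definition concrete, here is a minimal sketch of the coverage calculation. The function name and the vulnerability IDs are made up for illustration; they do not come from the paper.

```python
from typing import Set


def coverage(patched: Set[str], exploited: Set[str]) -> float:
    """Percentage of exploited vulnerabilities that the strategy patches."""
    if not exploited:
        raise ValueError("need at least one exploited vulnerability")
    return 100.0 * len(patched & exploited) / len(exploited)


# Hypothetical IDs: five vulnerabilities are exploited in the wild.
exploited = {"V1", "V2", "V3", "V4", "V5"}
# The strategy patches four vulnerabilities, only two of which are exploited.
patched = {"V1", "V2", "V6", "V7"}

print(coverage(patched, exploited))  # 40.0
```

Note that patching V6 and V7 costs effort without raising coverage, which is the same trade-off the CVSS 7+ strategy faces at a much larger scale.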

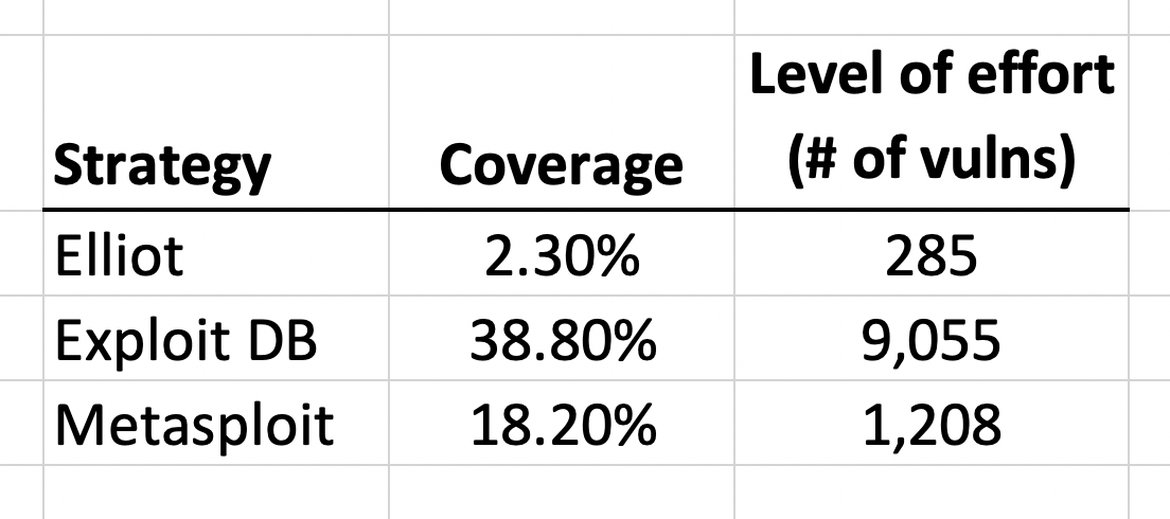

The numbers get even more prohibitive if you need to patch lower-severity vulnerabilities, as the Payment Card Industry Data Security Standard (PCI-DSS) requires. This raises the question of whether there is a more efficient way to prioritize vulnerability patching. It turns out that real-world attacks correlate significantly with vulnerabilities that have published exploits. The authors examine three major exploit frameworks (Elliot, Exploit DB and Metasploit) to see if they are reasonable proxies for vulnerabilities that are exploited in the wild.

The table shows the coverage data for these. The Metasploit framework has exploits for around 1.2K vulnerabilities and provides a coverage of 18%. Exploit DB includes 9K vulnerabilities and has a higher coverage of 39%. However, this is still rather low coverage and would not be a sufficient strategy by itself for safeguarding the enterprise.

The authors conclude by melding multiple heuristics, like the two described here, with a machine learning model to come up with a recommendation engine for vulnerability management. They extract features from different classifications of these vulnerabilities and combine them in a decision-tree-based model that gives a score representing the likelihood of the vulnerability being exploited. They train the model with XGBoost, a popular gradient boosting library that builds an ensemble of decision trees, and the graph below sums up the results.